Updated April 1, 2026: This article was originally published in September 2025 and has been updated to reflect the current state of both tools.

I was a Cursor agent power user for months. I even wrote the Cursor tips post that thousands of developers reference every week.

Then Claude Code came out. That became my go-to.

And now my go-to has changed, again. I didn't want it to happen, but let me explain why it did.

Let's compare the agents, features, pricing and user experience.

All of these products are converging. Cursor’s latest agent is pretty similar to Claude Code’s latest agent, which is pretty similar to Codex’s agent.

Historically, Cursor laid a lot of the foundation. Claude Code made improvements. Cursor copied useful things like to-do lists and better diff formats, and Codex adopted a lot of those too.

Codex is so similar to Claude Code that I honestly wonder if they trained off Claude Code’s outputs as well.

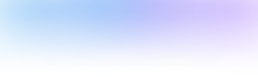

Small behavioral differences I notice:

- Codex tends to reason a bit longer, but its visible tokens-per-second output feels faster.

- Claude Code tends to reason less, but its visible output tokens come a bit slower.

- Inside Cursor, switching models changes the feel along the same lines: GPT-5 Codex models spend longer reasoning, Sonnet spends less time reasoning and more time outputting code, though the code comes out slightly slower, especially if you use Opus.

Ultimately, the agents are comparable. If you prefer Cursor or Claude Code or Codex, I respect it. I have a slight preference for Codex or Claude Code, mostly because the company that builds the tool also trains the models and seems to optimize the end-to-end loop.

Winner: Tie

I have become pretty fond of the GPT-5 Codex model family. Since September, OpenAI has shipped GPT-5-Codex, GPT-5.1-Codex-Max, GPT-5.2-Codex, and now GPT-5.3-Codex — the current model and the fastest yet, 25% quicker than its predecessor, with support for real-time steering mid-task. It has gotten noticeably better at knowing how long to reason for different kinds of tasks. Over-reasoning on basic tasks is annoying.

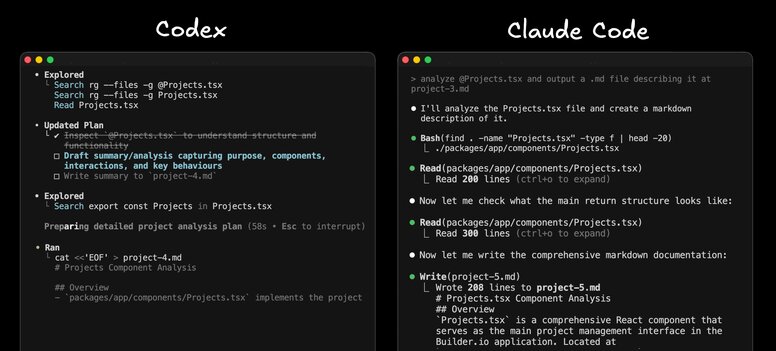

Codex also lets me pick low, medium, high, or even minimal reasoning for super fast runs. I like these options more than having just two model choices in Claude Code. Cursor on the other hand has a lot of options, which is cool in theory, but can be a bit overwhelming in practice.

I like that the same company that makes the tool also trains the models. They should know best how to use them, and they can offer me the best price because there is no middleman that also needs a margin.

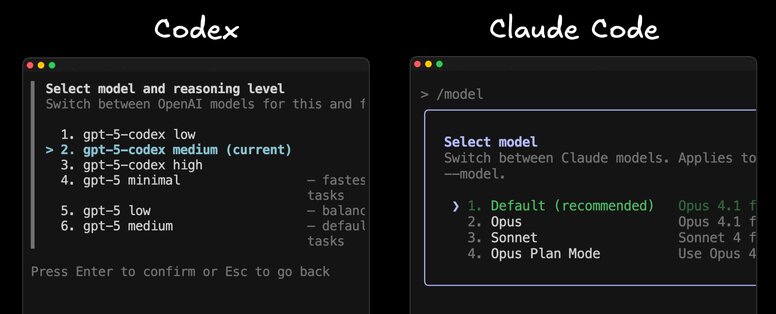

When we measured sentiment of Builder.io users using GPT-5 Codex, GPT-5 Mini, and Claude Sonnet, they rated GPT-5 Codex 40% higher on average.

Ultimately, different people prefer different models, so we'll just call this a tie.

Winner: Tie

Codex comes with standard ChatGPT plans. Claude Code comes with standard Claude plans.

On the surface, the pricing tiers look similar: a free tier, a roughly 20-dollar tier, and higher tiers at 100 to 200 dollars.

The key thing: GPT-5 Codex models are significantly more efficient under the hood than Claude Sonnet, and especially older Opus versions. In recent production usage, quality feels comparable by most anecdotes and public benchmarks, and GPT-5 Codex costs roughly half of Sonnet. The 10x Opus comparison has gotten more complicated now that Anthropic has shipped Opus 4.5 and 4.6 with meaningful efficiency improvements — but Codex is still the better value at most tiers.

Providers are not always explicit about exact request and token counts per plan, but in my experience Codex seems more generous.

Many more people can live comfortably on the 20-dollar Codex plan than on Claude’s 17-dollar plan, where limits get hit quickly. Even on the 100 and 200 tiers for Claude, heavy users still bump ceilings. With Codex Pro, I almost never hear about users hitting limits.

It is also worth noting that these are not just “coding plans.” You also get ChatGPT or Claude Chat. With ChatGPT you get one of the best image generation models and video generation models, plus generally more polished products like the ChatGPT desktop app I use daily.

Claude’s desktop app feels slower and more like a basic Electron wrapper. Claude does have better MCP integrations with many one-click connectors. Day to day though, I am a ChatGPT user.

Given that the number one complaint I hear about coding agents is running out of credits, Codex has a real edge.

Winner: Codex

Codex recognizes a git-tracked repo and is permissive by default. Nice.

Claude Code’s permission system used to drive me crazy — I’d routinely launch it with --dangerously-skip-permissions. Anthropic has shipped meaningful improvements since then; the friction has narrowed, though settings still don’t fully persist between sessions in all workflows. Claude Code has also added a VS Code extension, a web IDE for remote control, parallel sessions in the desktop app, and a Cowork feature that extends it beyond just coding.

Terminal UIs are fine in both. Claude Code’s terminal UI is a touch nicer and clearly more mature, and it ultimately gives you more control over permissions.

So while I think this is pretty neck and neck, I give the edge to Claude Code on UX overall.

Winner: Claude Code

Claude Code has a strong feature set: sub-agents, custom hooks, slash commands, and lots of configuration. For a deep dive, see my best tips here. Cursor has a good number of features as well (see my Cursor tips here). Codex has caught up significantly: it now has a full IDE extension, a web app, Slack integration (you can mention @Codex directly in a Slack thread), multi-agent v2 support, image inputs (screenshots, wireframes, diagrams), and a Codex SDK. Claude Code still wins on configuration depth and hooks, but the "Codex is limited" narrative is outdated.

Claude Code has all the slash commands

Where Codex also shines is that it's open source, so you can customize it any way you like or learn from it to develop your own agent.

But here is my honest take. Cursor once asked me what features they should add to win me back from Claude Code. I had learned from Claude Code that I do not care about features. I want the best agent, clear prompts, and reliable delivery. So I do not miss features when I do not have them. I need an agent and a good instructions file. That is it.

But all that said, if you want a lot of features (including some that are definitely pretty useful), Claude wins.

Winner: Claude Code

I have a whole separate video and post on writing a good AGENTS.md. One thing that annoys me about Claude Code is that it does not support the AGENTS.md standard, only CLAUDE.md.

Tools like Cursor, Codex, and Builder.io all support AGENTS.md. It is annoying to maintain a separate file for Claude when everything else respects the standard.

Winner: Codex

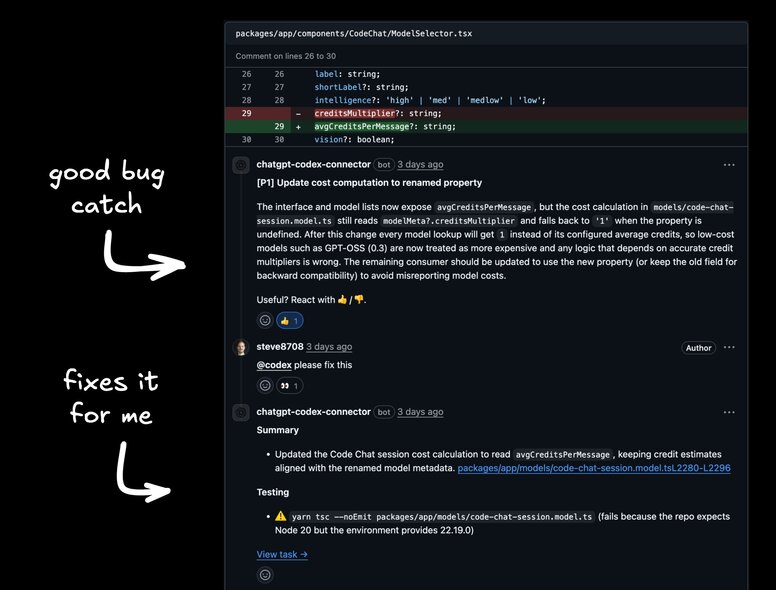

This is the main reason I prefer Codex.

We tried Claude Code’s GitHub integration briefly. The Builder.io dev team thought it sucked. Reviews were verbose without catching obvious bugs. You could not comment and ask it to fix things in a useful way. It just did not deliver value.

Codex’s GitHub app has been the opposite. Install it, turn on auto code review per repo, and it actually finds legitimate, hard-to-spot bugs. It comments inline, you can ask it to fix issues, it works in the background, and it lets you review and update the PR right there, then merge.

You can also mention @Codex directly on GitHub Issues and PRs — not just during code review, but in any issue thread. Find a bug, tag @Codex, and it picks it up. That makes the loop from discovery to fix feel seamless.

Crucially, the feel matches my terminal experience. The prompts that work in CLI work from the GitHub UI. Same model, same configuration, same behaviors. That consistency matters.

Runner-up here is Cursor’s Bugbot. It finds good bugs and offers useful “fix in web” or “fix in Cursor” paths. You cannot really go wrong with either. I still prefer Codex for the pricing, model integration, and consistency with my CLI flow.

Winner: Codex

Builder.io has Codex built in, which gives the whole team — not just terminal users — a way to ship with AI. If you need to iterate on visuals, you can do it quickly and include designers and PMs in the loop.

In 2025, I do not like handoffs. Our designers jump into Builder.io and use Codex to update sites and apps through prompting and a Figma-like visual editor. When they are ready, they send PRs. We review and merge.

Best part: everyone builds on the same foundation and codebase, using the same models and the same AGENTS.md. Designers, PMs, and engineers all align.

My personal winner right now is Codex. I use it daily. I like it a lot. The GitHub integration is excellent, the pricing and limits are favorable, the model options fit how I work, and the end-to-end consistency matters.

But to be completely honest, these days you really can’t go wrong with any of these options. Claude Code in particular has closed a lot of the gap since this article was first published — better UX, a VS Code extension, a web IDE, and a more polished desktop app. If you prefer Claude Code or Cursor, I completely respect that.

Have you tried the Codex CLI or background agents and PR bot? How did it go for you? Drop your experience and tips in the comments.

Is Codex still better than Claude Code in 2026?

Codex still wins on GitHub integration and pricing. But Claude Code has closed the gap significantly — better UX, a VS Code extension, a web IDE, and Cowork mode. Which is better depends on your workflow. If you live in the terminal and care about GitHub automation, Codex. If you want more configuration options and hooks, Claude Code.

Which should I use if I’m on a budget?

Codex Pro is the better value. More usage at lower cost tiers, and you also get ChatGPT, image generation, and video generation bundled in — not just a coding plan.

Can I use both Codex and Claude Code together?

Yes, and many teams do. Use Codex for background GitHub tasks and Claude Code for terminal sessions where you want more slash commands and hooks.

What about Cursor — is it still relevant?

Yes. Cursor still wins on IDE experience and has the most familiar UX for developers coming from VS Code. Bugbot is solid for code review too. You can’t really go wrong with it.

Does Claude Code still not support AGENTS.md?

Correct as of this writing. Claude Code uses CLAUDE.md. If you want one instructions file that works across tools, use AGENTS.md for Codex, Cursor, and Builder.io, and keep a synced CLAUDE.md for Claude Code.
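One way to keep the two files in sync is a small script that treats AGENTS.md as the source of truth and overwrites CLAUDE.md whenever the contents differ. This is only a minimal sketch: the function name `sync_instructions` is made up for illustration, and it assumes both files live at the repo root and that a plain copy (rather than a symlink or an import) is acceptable for your setup.

```python
from pathlib import Path


def sync_instructions(
    repo_root: Path,
    source: str = "AGENTS.md",
    target: str = "CLAUDE.md",
) -> bool:
    """Copy `source` over `target` when their contents differ.

    Hypothetical helper: assumes both files sit directly in `repo_root`.
    Returns True if `target` was written, False if it was already in sync.
    """
    src = repo_root / source
    dst = repo_root / target

    if not src.is_file():
        raise FileNotFoundError(f"{src} not found")

    content = src.read_text(encoding="utf-8")

    # Skip the write when the target already matches, so the file's
    # modification time and git status stay untouched.
    if dst.is_file() and dst.read_text(encoding="utf-8") == content:
        return False

    dst.write_text(content, encoding="utf-8")
    return True
```

Calling `sync_instructions(Path.cwd())` from a pre-commit hook or a CI step would keep CLAUDE.md from drifting. If your filesystem and tooling handle symlinks well, linking CLAUDE.md to AGENTS.md may be simpler than copying.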

How does Builder.io fit into this?

Builder.io gives you a visual layer on top of these agents. Instead of everyone living in the terminal, designers and PMs can use the Figma-like editor with Codex or Claude Code under the hood. Everyone works on the same codebase, same models, same instructions file. PRs come out the other end.

Builder.io visually edits code, uses your design system, and sends pull requests.